Multi-Task Spacecraft Perception with a Lightweight Segmentation Model

Context

This post describes my approach to a multi-task spacecraft perception problem: building a single efficient model that can classify, detect, and segment spacecraft from RGB images, under strict efficiency limits (100 GFLOPs, 30M parameters).

30% of the final score comes from model efficiency. A smaller model that’s almost as accurate will beat a larger model that’s slightly better. This shaped basically every decision I made.

Note: this is all part of an ML competition organized in a workshop at a CV conference. Because of paper submission rules, I will keep the name of the competition and the workshop anonymous until the conference takes place. I will then also make the GitHub repo public.

The Problem

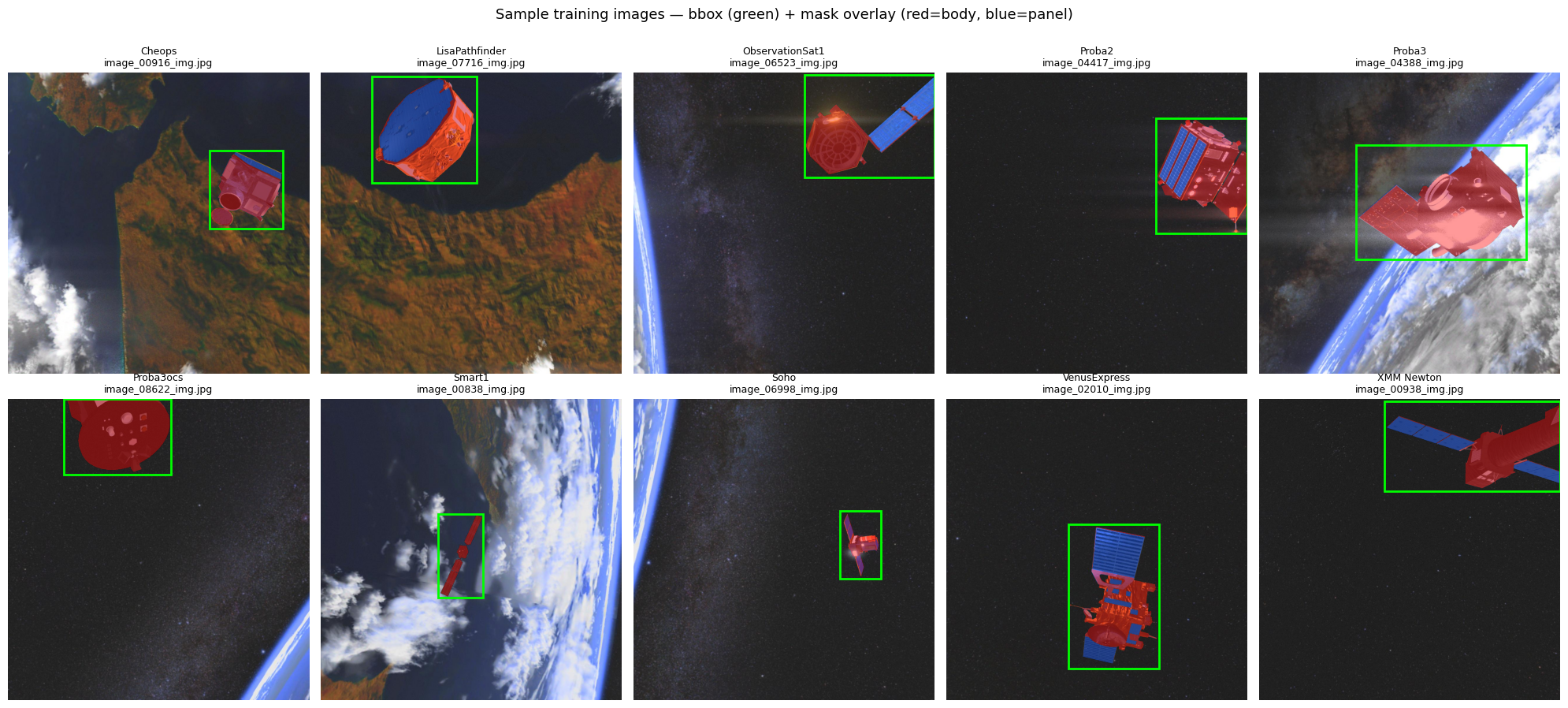

You get an RGB image of a spacecraft in space. From that single image, you need to:

- Classify the spacecraft type (10 classes: Cheops, Proba2, XMM-Newton, etc.)

- Detect it with a bounding box (COCO-style multi-threshold IoU)

- Segment its components — body and solar panel — at pixel level

The scoring formula is:

\[S_\text{final} = 0.70 \times S_\text{acc} + 0.15 \times S_\text{flops} + 0.15 \times S_\text{params}\]

where the accuracy term weights segmentation and detection at 40% each, and classification at 20%.
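As a quick sanity check, the scoring formula can be written as two small functions. This is only a sketch of the weighting described above; the function names are made up for illustration, and how the organizers map raw FLOPs and parameter counts to \(S_\text{flops}\) and \(S_\text{params}\) is not covered here, so both are taken as already-computed inputs.

```python
def accuracy_score(s_cls: float, s_det: float, s_seg: float) -> float:
    """Accuracy term: 40% segmentation, 40% detection, 20% classification."""
    return 0.40 * s_seg + 0.40 * s_det + 0.20 * s_cls


def final_score(s_acc: float, s_flops: float, s_params: float) -> float:
    """Final score: 70% accuracy, 15% FLOP efficiency, 15% parameter efficiency."""
    return 0.70 * s_acc + 0.15 * s_flops + 0.15 * s_params


if __name__ == "__main__":
    # Component scores from the "Without TTA" row reported in the Results section.
    s_acc = accuracy_score(s_cls=1.000, s_det=0.919, s_seg=0.868)
    print(f"S_acc = {s_acc:.3f}")
```

Because 30% of the total sits in the two efficiency terms, a one-point gain in \(S_\text{acc}\) is worth only 0.7 points of \(S_\text{final}\), which is why small accuracy gains rarely justify a much heavier model.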

The competition dataset consists of 80,000 images (60k train, 20k val) rendered at 1024×1024, with 10 balanced spacecraft classes against realistic space backgrounds. The test set was 5k images. Interestingly, the whole dataset was synthetically generated.

My Approach: Segmentation First

An important property of the task is that there is exactly one spacecraft per image, so only one bounding box needs to be predicted. This greatly simplifies detection. The classification task didn’t seem very difficult either: the leaderboard was full of submissions with 100% classification accuracy. Segmentation was therefore the task to focus on, and that’s why I built my model on top of segmentation architectures.

I started with a naive approach to bounding box prediction: if you can segment the spacecraft well, you can derive the bounding box by taking the min/max coordinates of the predicted mask pixels. Zero extra parameters, zero extra FLOPs.
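A minimal NumPy sketch of that min/max step is below. The function name, the inclusive pixel-index convention, and the choice to merge the body and panel channels before taking the extent are assumptions for illustration; the competition's exact box format may differ.

```python
import numpy as np


def mask_to_bbox(mask: np.ndarray):
    """Return (x_min, y_min, x_max, y_max) of positive pixels, or None if empty.

    `mask` is a binary (H, W) array or a (C, H, W) stack of per-component
    masks; channels are merged so the box covers the whole spacecraft.
    Coordinates are inclusive pixel indices (an assumed convention).
    """
    if mask.ndim == 3:
        mask = mask.any(axis=0)
    ys, xs = np.nonzero(mask)
    if ys.size == 0:
        return None  # nothing predicted; the caller needs a fallback box
    return int(xs.min()), int(ys.min()), int(xs.max()), int(ys.max())


if __name__ == "__main__":
    demo = np.zeros((2, 8, 8), dtype=bool)
    demo[0, 2:5, 3:7] = True  # "body" channel
    demo[1, 4:6, 1:4] = True  # "panel" channel
    print(mask_to_bbox(demo))
```

One caveat of this approach is that a single stray false-positive pixel far from the spacecraft stretches the box, so the detection score depends directly on how clean the mask is.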

The architecture:

Input image (512×512)

↓

[Pretrained Encoder]

├→ deepest features → [GAP → Dropout → Linear] → classification (10 classes)

└→ multi-scale features → [Decoder with skip connections]

↓

segmentation mask (2 channels: body + panel)

↓ at inference

[min/max of positive pixels] → bounding box (free!)

I built this on top of segmentation-models-pytorch, which made it easy to swap encoders (including timm backbones) and decoders. The classification head is just global average pooling + dropout + a single linear layer branching off the encoder bottleneck, adding very few parameters to the model.
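The classification branch described above can be sketched in plain PyTorch as follows. This is an illustration rather than the code from the repo: the class name, the dropout rate of 0.2, and the 256-channel bottleneck (the width of MiT-B0's last stage) are assumptions.

```python
import torch
from torch import nn


class ClassificationHead(nn.Module):
    """GAP -> Dropout -> Linear on the deepest encoder feature map."""

    def __init__(self, in_channels: int, num_classes: int = 10, dropout: float = 0.2):
        super().__init__()
        self.pool = nn.AdaptiveAvgPool2d(1)   # global average pooling
        self.dropout = nn.Dropout(dropout)    # rate is an assumed value
        self.fc = nn.Linear(in_channels, num_classes)

    def forward(self, features: torch.Tensor) -> torch.Tensor:
        x = self.pool(features).flatten(1)    # (N, C, H, W) -> (N, C)
        return self.fc(self.dropout(x))       # (N, num_classes) logits


if __name__ == "__main__":
    head = ClassificationHead(in_channels=256)  # assumed bottleneck width
    n_params = sum(p.numel() for p in head.parameters())
    logits = head(torch.randn(2, 256, 16, 16))
    print(n_params, tuple(logits.shape))
```

With a 256-channel bottleneck the head costs 256 × 10 weights plus 10 biases, which is negligible next to the encoder and decoder.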

Architecture Search: 15 Combinations

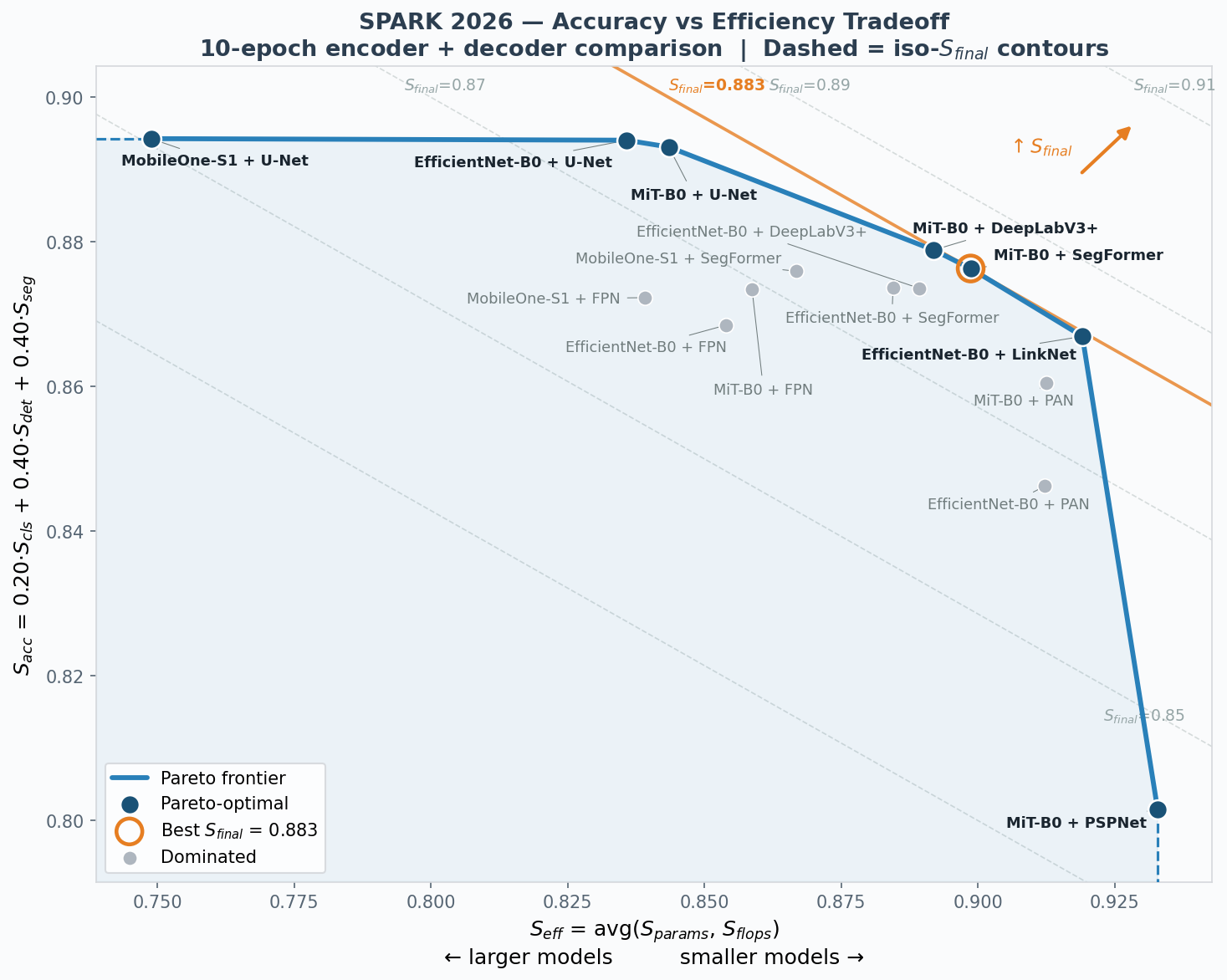

Before touching any hyperparameters, I wanted to understand which encoder-decoder combination gives the best accuracy-efficiency trade-off. I trained 15 encoder-decoder combinations, drawn from the 3 encoders and 7 decoders below, for 10 epochs each with identical settings:

Encoders: EfficientNet-B0, MiT-B0, MobileOne-S1

Decoders: U-Net, SegFormer, DeepLabV3+, FPN, PAN, PSPNet, LinkNet

Classification turned out to be basically solved: every combination hit ≥99.9% accuracy in just 10 epochs (except PSPNet at 94.4%), so the real differentiator was segmentation quality. U-Net decoders produced the best masks, but they’re expensive: MobileOne-S1 + U-Net tied for the best raw accuracy (\(S_\text{acc} = 0.894\)) but its 19.7 GFLOPs and 9.16M parameters tank the efficiency score. MiT-B0 was the strongest encoder across the board. MiT-B0 + SegFormer landed at the “knee” of the Pareto frontier, with strong accuracy without paying too much in efficiency. MiT-B0 + DeepLabV3+ and EfficientNet-B0 + LinkNet were very close to the best.
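To make the "Pareto frontier" idea concrete, here is a small sketch that filters candidates down to the non-dominated ones. The function name is hypothetical, the cost is collapsed into a single number for simplicity (the real trade-off involves both FLOPs and parameters), and the values in the demo are toy numbers, not results from the search.

```python
def pareto_front(candidates):
    """Return names of candidates that no other candidate dominates.

    Each candidate is (name, accuracy, cost). One candidate dominates another
    if it is at least as accurate and at least as cheap, and strictly better
    on one of the two.
    """
    front = []
    for name, acc, cost in candidates:
        dominated = any(
            (a >= acc and c <= cost) and (a > acc or c < cost)
            for _, a, c in candidates
        )
        if not dominated:
            front.append(name)
    return front


if __name__ == "__main__":
    # Toy values for illustration only (accuracy, cost in arbitrary units).
    toy = [("A", 0.90, 20.0), ("B", 0.89, 5.0), ("C", 0.85, 6.0), ("D", 0.80, 2.0)]
    print(pareto_front(toy))
```

In this toy set, B plays the role of the "knee": it gives up very little accuracy relative to A for a large drop in cost.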

Note: 10 epochs is not enough to fully converge the models, and this is a fair critique. I had only one GPU available, so that budget is what I chose to make the search feasible. I think it’s still a reasonable budget to get an early estimate of model capability. Something I should have done is run the subsequent hyperparameter search for the top 3 models instead of just one. Otherwise we can’t be confident we ended up with the optimal architecture.

Hyperparameter Tuning

With MiT-B0 + SegFormer as the base, I ran a four-phase hyperparameter search (28 runs total, 30 epochs each), carrying the best config forward from each phase:

Phase 1 — Learning rate. Higher LR = better. Going from \(\eta = 10^{-3}\) to \(2 \times 10^{-3}\) gave +0.8pp. I used a dual LR scheme where the pretrained encoder gets 10× lower learning rate than the decoder to avoid catastrophic forgetting of ImageNet features.
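The dual learning rate scheme maps naturally onto optimizer parameter groups. The sketch below assumes the model exposes its pretrained encoder and its newly initialized parts (decoder plus heads) as separate modules; the function and argument names are hypothetical.

```python
import torch


def build_optimizer(encoder, new_layers, base_lr=2e-3, encoder_lr_scale=0.1,
                    weight_decay=1e-4):
    """AdamW with a 10x lower learning rate for the pretrained encoder."""
    param_groups = [
        # Pretrained weights: small steps to preserve ImageNet features.
        {"params": encoder.parameters(), "lr": base_lr * encoder_lr_scale},
        # Randomly initialized decoder and heads: full learning rate.
        {"params": new_layers.parameters(), "lr": base_lr},
    ]
    return torch.optim.AdamW(param_groups, lr=base_lr, weight_decay=weight_decay)


if __name__ == "__main__":
    # Stand-in modules; in practice these would be the real encoder and decoder.
    enc, dec = torch.nn.Linear(8, 8), torch.nn.Linear(8, 2)
    opt = build_optimizer(enc, dec)
    print([group["lr"] for group in opt.param_groups])
```

Any learning rate scheduler attached to this optimizer scales both groups together, so the 10× ratio is preserved throughout training.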

Phase 2 — Decoder width and resolution. Cutting decoder channels from 256 to 128 barely hurt accuracy but halved the decoder’s cost (+0.4pp in \(S_\text{final}\)). Higher resolution (768) pushes accuracy up but the FLOP penalty dominates.

Phase 3 — Augmentation and losses. Light augmentation (just flips and small rotations) beat medium and heavy augmentation consistently. The loss function — Dice + BCE + optional Lovász for segmentation, cross-entropy for classification — was robust to moderate reweighting. None of the loss variants beat the baseline.
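For reference, a Dice + BCE segmentation loss can be sketched as below. The equal weighting of the two terms, the smoothing constant, and computing Dice per channel over the whole batch are assumptions; the post does not state the exact formulation used, and the optional Lovász term is left out.

```python
import torch
import torch.nn.functional as F


def dice_bce_loss(logits: torch.Tensor, targets: torch.Tensor, eps: float = 1.0):
    """Sum of binary cross-entropy and soft Dice loss.

    logits, targets: (N, C, H, W), with targets as 0/1 floats and one
    channel per component (e.g. body and solar panel).
    """
    bce = F.binary_cross_entropy_with_logits(logits, targets)
    probs = torch.sigmoid(logits)
    dims = (0, 2, 3)  # reduce over batch and spatial dims, keep channels
    intersection = (probs * targets).sum(dims)
    denom = probs.sum(dims) + targets.sum(dims)
    dice = (2.0 * intersection + eps) / (denom + eps)
    return bce + (1.0 - dice).mean()


if __name__ == "__main__":
    targets = torch.zeros(1, 2, 8, 8)
    targets[:, :, 2:6, 2:6] = 1.0
    logits = torch.randn(1, 2, 8, 8)
    print(float(dice_bce_loss(logits, targets)))
```

The classification cross-entropy would be added on top of this with its own weight.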

Phase 4 — LR refinement. Pushed the learning rate further to \(5 \times 10^{-3}\) for another +0.4pp. Going higher (\(7 \times 10^{-3}\) or \(10^{-2}\)) caused training to diverge to NaN.

Total improvement from tuning: +1.6pp over the architecture search baseline.

Results

On the hidden test set, the final model achieves:

| | \(S_\text{cls}\) | \(S_\text{det}\) | \(S_\text{seg}\) | \(S_\text{acc}\) | \(S_\text{final}\) |
|---|---|---|---|---|---|
| Without TTA | 1.000 | 0.919 | 0.868 | 0.915 | 0.916 |
| With D4 TTA | 1.000 | 0.916 | 0.880 | 0.918 | 0.919 |

The model has 3.45M parameters and uses 4.48 GFLOPs. That’s roughly 9× under the parameter budget and 22× under the FLOP budget. Test-time augmentation (averaging predictions across 8 geometric transforms from the D4 symmetry group) helps segmentation at a slight detection cost, since averaging tends to shrink mask boundaries and produce tighter bounding boxes.
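D4 test-time augmentation can be sketched in NumPy as follows: each of the 8 transforms (4 rotations, with and without a horizontal flip) is applied to the input, the prediction is mapped back to the original orientation, and the results are averaged. The `predict` callable and the choice to average its raw outputs are assumptions for illustration.

```python
import numpy as np


def d4_tta(predict, image: np.ndarray) -> np.ndarray:
    """Average `predict` over the 8 symmetries of the square (D4 group).

    image: (C, H, W) array with H == W.
    predict: callable mapping a (C, H, W) array to a (K, H, W) array.
    """
    outputs = []
    for flip in (False, True):
        for k in range(4):
            x = np.flip(image, axis=-1) if flip else image
            x = np.rot90(x, k, axes=(-2, -1))
            y = predict(x)
            # Undo the transforms in reverse order: rotation first, then flip.
            y = np.rot90(y, -k, axes=(-2, -1))
            if flip:
                y = np.flip(y, axis=-1)
            outputs.append(y)
    return np.mean(outputs, axis=0)


if __name__ == "__main__":
    img = np.random.rand(3, 16, 16)
    # With an identity "model", the averaged output must equal the input.
    print(np.allclose(d4_tta(lambda x: x, img), img))
```

Only the segmentation output needs the inverse transform; classification logits can simply be averaged across the 8 views.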

Bounding box regression

I also tried replacing the naive mask-derived bounding box with a regression head, a natural next step. I added a GIoU loss term to the total loss function to provide a gradient signal for the regression head. This worked as expected on validation, where the detection score improved significantly compared to the naive approach. However, it underperformed on the test set, suggesting overfitting.

In every other submission, I observed very good correlation between validation and test scores. Due to this overfitting, I decided to stick with the naive approach. However, it’s clear that a bounding box regression head is fundamentally a better approach. Trained properly and with enough care to mitigate overfitting, I’m confident it would have performed well.
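For readers unfamiliar with GIoU, here is a plain-Python sketch of the quantity for a single pair of boxes. It follows the standard definition (IoU minus the fraction of the smallest enclosing box not covered by the union); the function names are illustrative, and a training implementation would be a batched, differentiable version of the same arithmetic.

```python
def giou(box_a, box_b) -> float:
    """Generalized IoU of two (x1, y1, x2, y2) boxes with x1 < x2 and y1 < y2."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    # Smallest axis-aligned box enclosing both inputs.
    hull = (max(ax2, bx2) - min(ax1, bx1)) * (max(ay2, by2) - min(ay1, by1))
    return inter / union - (hull - union) / hull


def giou_loss(pred_box, target_box) -> float:
    """Loss in [0, 2]: 0 for a perfect match, above 1 for disjoint boxes."""
    return 1.0 - giou(pred_box, target_box)


if __name__ == "__main__":
    print(giou_loss((0.0, 0.0, 2.0, 2.0), (1.0, 1.0, 3.0, 3.0)))
```

Unlike plain IoU, the loss still changes when the boxes do not overlap, which is what makes it usable as a training signal for a regression head.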

Unexplored ideas

A list of ideas I didn’t get to try:

- Using a detection loss during training as an auxiliary signal, while still deriving the actual bounding box from the mask at inference time. The intuition is that a GIoU or L1 loss on bounding box coordinates could provide additional gradient signal to the encoder.

- Using karpathy/autoresearch for automatic agentic architecture or hyperparameter search.

Training Details

- Optimizer: AdamW, weight decay \(10^{-4}\), gradient clipping at norm 1.0

- Schedule: Cosine annealing with 1-epoch linear warmup

- Input: 512×512 (predictions upsampled to 1024×1024 for submission)

- Loss: Dice + BCE for segmentation, cross-entropy for classification

- Hardware: Single GPU, mixed-precision (AMP) training

- Dataset normalization: mean=(0.27, 0.26, 0.28), std=(0.19, 0.19, 0.24) — space backgrounds are much darker than natural images, so these differ from ImageNet defaults
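The schedule in the list above (linear warmup followed by cosine annealing) can be written as a small function of the training step. Stepping per iteration rather than per epoch, decaying to a minimum of zero, and the function name are assumptions for illustration.

```python
import math


def lr_at(step: int, total_steps: int, warmup_steps: int,
          peak_lr: float, min_lr: float = 0.0) -> float:
    """Learning rate at `step`: linear warmup, then cosine decay to `min_lr`."""
    if step < warmup_steps:
        # Ramp linearly so the last warmup step reaches peak_lr.
        return peak_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


if __name__ == "__main__":
    # Toy setup: 30 epochs of 100 steps each, with 1 epoch of warmup.
    steps_per_epoch, epochs = 100, 30
    total = steps_per_epoch * epochs
    for s in (0, 99, 100, total // 2, total):
        print(s, lr_at(s, total, steps_per_epoch, peak_lr=5e-3))
```

With the dual learning rate scheme, the same multiplier would be applied to both the encoder and decoder groups.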

References

- Xie, E. et al. (2021). SegFormer: Simple and Efficient Design for Semantic Segmentation with Transformers. NeurIPS.

- Tan, M. & Le, Q. (2019). EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks. ICML.

- Ronneberger, O. et al. (2015). U-Net: Convolutional Networks for Biomedical Image Segmentation. MICCAI.

- Vasu, P. K. A. et al. (2023). MobileOne: An Improved One Millisecond Mobile Backbone. CVPR.

- Chen, L.-C. et al. (2018). Encoder-Decoder with Atrous Separable Convolution for Semantic Image Segmentation (DeepLabV3+). ECCV.

- Lin, T.-Y. et al. (2017). Feature Pyramid Networks for Object Detection. CVPR.

- Liu, S. et al. (2018). Path Aggregation Network for Instance Segmentation. CVPR.

- Zhao, H. et al. (2017). Pyramid Scene Parsing Network (PSPNet). CVPR.

- Chaurasia, A. & Culurciello, E. (2017). LinkNet: Exploiting Encoder Representations for Efficient Semantic Segmentation. IEEE VCIP.

- Musallam, M. A. et al. (2021). SPARK: SPAcecraft Recognition leveraging Knowledge of Space Environment. ICIP.

- Berman, M. et al. (2018). The Lovász-Softmax loss: A tractable surrogate for the optimization of the intersection-over-union measure in neural networks. CVPR.
